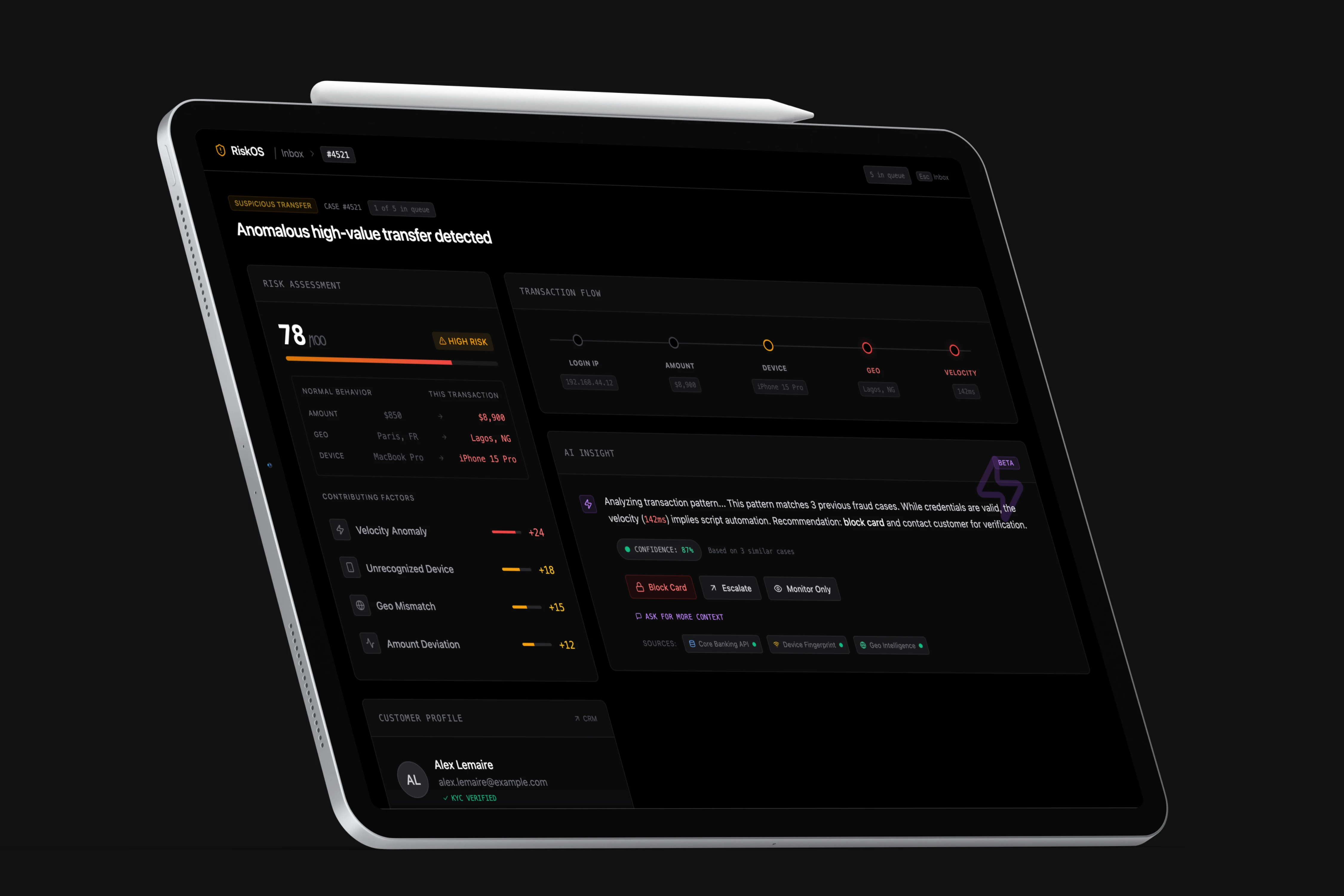

RiskOS

AI-Augmented Fraud Detection

Victor Soussan · Product Design

Context

Agentic interfaces raise a question I find genuinely interesting from a product design perspective: when an AI is part of a decision process, how do you keep the person making the call actively engaged? If the AI does too much, the human disengages. If it does too little to explain itself, trust erodes.

Fraud detection in European banking was a natural context to explore this. Analysts work under time pressure, 80% of their alerts turn out to be false alarms, and the final decision is always theirs.

RiskOS is the prototype I built to test ideas around that balance.

Initial observation

A fraud analyst at a European neobank handles 80 to 150 alerts per shift. Most are harmless. Every minute spent on a false alarm is a minute not available for a real case.

In many teams, the daily tool is still a spreadsheet and a set of fixed rules. No surrounding context, no sorting by relevance, no memory of previous cases.

Same data, two readings.

On the left, the alert feed as most institutions receive it: a raw spreadsheet. On the right, the same information organized in RiskOS.

Design approach

The central question was whether the AI could handle the preparation work while the analyst retained full ownership of the decision.

Three principles guided the design:

- The AI sets up context so the analyst can think clearly. It doesn't decide.

- The reasoning is readable, not hidden behind a number.

- When the analyst acts, something happens outside the tool, not just inside it.

Where RiskOS sits in the process.

A suspicious transaction hits the bank's automated rules. If flagged, the alert goes into a queue. The AI analyzes it. The analyst reviews and decides.
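
One way to picture that flow is as a small state machine. This is a sketch only: the stage names, the `Alert` shape and the `advance` helper are assumptions made for illustration, not taken from the prototype.

```typescript
// Hypothetical stage names; the text only describes the order of the steps.
type Stage = "rules" | "queued" | "ai_analysis" | "analyst_review" | "decided";

interface Alert {
  id: string;
  stage: Stage;
  flagged: boolean; // result of the bank's automated rules
}

// Each stage has exactly one successor; "decided" is terminal.
const NEXT: Record<Stage, Stage | null> = {
  rules: "queued",
  queued: "ai_analysis",
  ai_analysis: "analyst_review",
  analyst_review: "decided",
  decided: null,
};

function advance(alert: Alert): Alert {
  // A transaction that the rules did not flag never enters the queue.
  if (alert.stage === "rules" && !alert.flagged) return alert;
  const next = NEXT[alert.stage];
  return next === null ? alert : { ...alert, stage: next };
}
```

The point of the model is that the AI stage always sits before the analyst stage and never replaces it: there is no path from `ai_analysis` to `decided` that skips `analyst_review`.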

Triage under time pressure

The analyst opens their session. Five cases are waiting, sorted by risk level. They can filter by priority and track their progress with a live counter.

Triage view

Frustration: Without sorting, the analyst scrolls through the whole list looking for the urgent ones.

Benefit: Color-coded priorities and a live counter. Sorting takes a few seconds.
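
The triage behaviour described above (sort by risk, filter by priority, live counter) can be sketched in a few lines. The `TriageCase` fields, the priority labels and the function names are illustrative assumptions, not the prototype's actual data model.

```typescript
// Hypothetical case shape for illustration.
type Priority = "high" | "medium" | "low";

interface TriageCase {
  id: string;
  riskScore: number; // higher means riskier
  priority: Priority;
  resolved: boolean;
}

// Open cases, optionally filtered by priority, highest risk first.
// filter() returns a new array, so the caller's list is not mutated by sort().
function triage(cases: TriageCase[], only?: Priority): TriageCase[] {
  return cases
    .filter((c) => !c.resolved && (only === undefined || c.priority === only))
    .sort((a, b) => b.riskScore - a.riskScore);
}

// Text for the live counter.
function progress(cases: TriageCase[]): string {
  const done = cases.filter((c) => c.resolved).length;
  return `${done}/${cases.length} resolved`;
}
```

Keeping both functions pure means the view can recompute them on every render, so the counter and the ordering stay in step with the queue without extra state.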

Making the AI reasoning readable

The AI writes its analysis in real time, word by word. Relevant details are highlighted as they appear. The data sources used light up progressively, and a confidence score indicates the level of certainty.

The action buttons remain hidden until the analysis is complete. The analyst reads the full reasoning before any decision is possible.

AI analysis, streaming

Frustration: Usually the analyst gets a risk number with no explanation.

Benefit: Here the AI writes out what it found, step by step. The analyst reads the reasoning, then decides.
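
A minimal sketch of how the streaming and the button gating could be modelled, assuming the analysis arrives as a sequence of events. The event names, the `AnalysisState` shape and the `actionsVisible` helper are hypothetical; in a React app a reducer like this could be passed to `useReducer`.

```typescript
// Hypothetical state and event shapes for illustration.
interface AnalysisState {
  text: string;
  sourcesUsed: string[];
  confidence: number | null;
  status: "streaming" | "complete";
}

type AnalysisEvent =
  | { type: "word"; word: string }
  | { type: "source"; name: string }
  | { type: "done"; confidence: number };

const initialAnalysis: AnalysisState = {
  text: "",
  sourcesUsed: [],
  confidence: null,
  status: "streaming",
};

function reduceAnalysis(state: AnalysisState, event: AnalysisEvent): AnalysisState {
  // Once the analysis is complete, late events are ignored.
  if (state.status === "complete") return state;
  switch (event.type) {
    case "word":
      // Append one word at a time, separated by a single space.
      return { ...state, text: state.text === "" ? event.word : `${state.text} ${event.word}` };
    case "source":
      // A source lights up once, the first time it is used.
      return state.sourcesUsed.indexOf(event.name) >= 0
        ? state
        : { ...state, sourcesUsed: state.sourcesUsed.concat([event.name]) };
    case "done":
      return { ...state, confidence: event.confidence, status: "complete" };
  }
}

// The action buttons render only when this returns true.
function actionsVisible(state: AnalysisState): boolean {
  return state.status === "complete";
}
```

Deriving button visibility from `status` rather than storing it separately means there is no state in which the buttons are shown while the text is still streaming.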

Acting on a case

The analyst picks an action: block the card, pass the case to a senior, or keep it under watch. A confirmation screen recaps what happened. Then two things appear that usually stay invisible: the Slack message to the fraud team, and the SMS to the customer.

Confirmation and what happened next

Frustration: Usually the analyst acts and never sees the result.

Benefit: Every action has a visible trace: the Slack message, the customer SMS, the ticket.
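
As a sketch, the visible trace can be treated as plain data derived from the chosen action. Everything here is illustrative: the action identifiers, the message wording, and in particular the rule that only a card block triggers a customer SMS are assumptions, and nothing is actually sent to Slack or an SMS gateway.

```typescript
// Hypothetical identifiers for the three actions described in the text.
type Action = "block_card" | "escalate_to_senior" | "keep_under_watch";
type Channel = "slack" | "sms" | "ticket";

interface Trace {
  channel: Channel;
  recipient: string;
  body: string;
}

// Build the list of outside effects to show on the confirmation screen.
function tracesFor(action: Action, caseId: string): Trace[] {
  const traces: Trace[] = [
    { channel: "ticket", recipient: "case log", body: `Case ${caseId}: ${action}` },
    { channel: "slack", recipient: "fraud team", body: `Case ${caseId} closed with action ${action}` },
  ];
  // Assumption: the customer is only contacted when their card is blocked.
  if (action === "block_card") {
    traces.push({
      channel: "sms",
      recipient: "customer",
      body: "Your card has been blocked as a precaution.",
    });
  }
  return traces;
}
```

Returning the traces as data keeps the confirmation screen honest: what the analyst sees is the same list that would drive the outgoing messages.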

Resolving a false alarm in eight seconds

A medium-risk alert arrives: score 45, a 450 euro payment. The AI reviews the transaction history and finds nothing unusual. The analyst confirms with one click.

False alarm, resolved

Processing a full queue

The analyst works through five cases in sequence. After each resolution, the next case loads automatically. At the end: five cases resolved, 92 seconds total.

Case flow and session recap
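
The auto-advance and the end-of-session recap can be sketched as a small queue model. The `Session` shape and the function names are assumptions for illustration, and the timings in the usage lines are made-up values, not measurements from the prototype.

```typescript
// Hypothetical session model for illustration.
interface Resolution {
  caseId: string;
  seconds: number; // time the analyst spent on the case
}

interface Session {
  pending: string[]; // case ids, already sorted by risk
  resolved: Resolution[];
}

// The case currently on screen, or null when the queue is empty.
function currentCase(s: Session): string | null {
  const first = s.pending[0];
  return first === undefined ? null : first;
}

// Resolving the current case makes the next one load automatically,
// because the next case becomes the head of the pending list.
function resolveCurrent(s: Session, seconds: number): Session {
  const current = currentCase(s);
  if (current === null) return s;
  return {
    pending: s.pending.slice(1),
    resolved: s.resolved.concat([{ caseId: current, seconds }]),
  };
}

// Numbers for the session recap screen.
function recap(s: Session): { resolved: number; totalSeconds: number } {
  return {
    resolved: s.resolved.length,
    totalSeconds: s.resolved.reduce((sum, r) => sum + r.seconds, 0),
  };
}

// Usage with made-up timings:
let session: Session = { pending: ["c1", "c2", "c3"], resolved: [] };
session = resolveCurrent(session, 10);
session = resolveCurrent(session, 20);
session = resolveCurrent(session, 30);
const summary = recap(session); // { resolved: 3, totalSeconds: 60 }
```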

Observations

Two findings from this project apply well beyond fraud detection.

Streaming the reasoning builds trust in a way that scores don't.

When the AI writes its analysis word by word, the analyst reads along and forms their own view at the same time. A confidence score after the fact just says "trust me" without showing the work.

Hiding the buttons until the analysis is done changes how people read.

The decision buttons only appear once the AI finishes writing. Under pressure, people click the first thing available. This small constraint gives the reasoning a chance to land.

I see the same dynamics in other contexts: compliance, medical triage, content moderation, incident response.

Stack

Working prototype. React 18, Vite, Tailwind CSS. Deployed on Vercel.